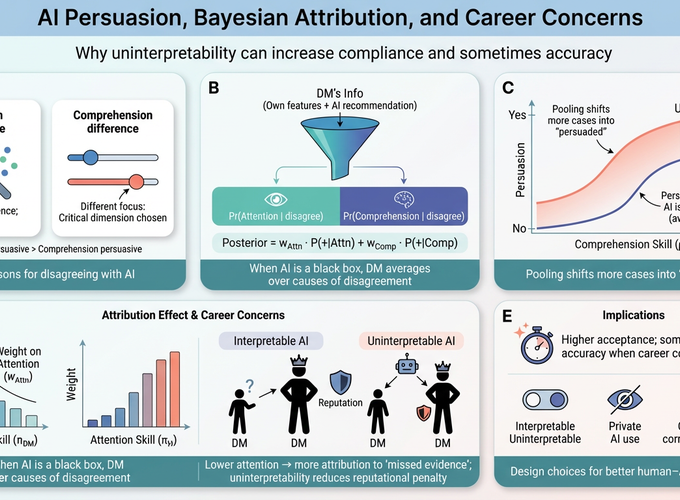

This paper studies AI persuasion by distinguishing between two sources of disagreement: attention differences, where the AI detects features the decision-maker missed, and comprehension differences, where the AI and the decision-maker interpret the observed features differently. We show that the AI persuades the decision-maker more effectively when disagreement stems from attention differences than when it stems from comprehension differences. We also show that the AI’s interpretability shapes how the decision-maker attributes the source of disagreement and, in turn, whether they follow the AI’s recommendation. Our main result is that making the AI uninterpretable can enhance persuasion and, in the presence of career concerns, improve decision accuracy.